With the rapid growth of large-model AI training, high-performance computing and cloud computing, enterprise demand for server GPU compute and storage performance is growing explosively. Traditional server architectures, however, have several expansion bottlenecks: a limited number of PCIe slots, difficulty deploying GPUs and SSDs side by side, and inflexible expansion options. These problems severely restrict business innovation. This article analyzes these industry pain points and shows how the LR-LINK LRSV9500-4I provides enterprises with a one-stop expansion solution through flexible X4/X8/X16 Bifurcation modes.

I. Severe Shortage of PCIe Slot Resources

1.1 Current Situation

Modern server motherboards usually provide only 4 to 8 PCIe slots, which must serve network cards, GPUs, NVMe SSDs, RAID cards and other peripherals at the same time. In AI training scenarios, a single server may need 4 to 8 GPUs plus high-speed storage devices, so the number of PCIe slots is often the tightest constraint.

1.2 Business Impacts

· GPUs and SSDs are hard to deploy together, forcing a trade-off between computing power and storage

· Enterprises have to purchase more servers, which significantly increases TCO

· Cabinet space is exhausted quickly, leaving data center resources poorly utilized

1.3 LRSV9500-4I Solution

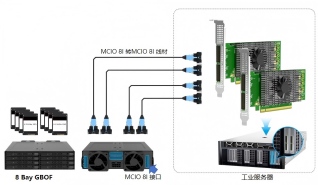

Built on the Broadcom PEX89048 PCIe Switch chip, the LRSV9500-4I expands a single PCIe Gen 5.0 x16 slot into four MCIO 8i interfaces. It can connect up to eight NVMe SSDs in X4 mode or two high-end GPUs in X16 mode. Only one PCIe slot is occupied, so a slot that would otherwise host a single device can serve up to eight, which is the basis of the 800% expansion-efficiency figure.
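The device counts above follow from simple lane arithmetic. The sketch below is illustrative only: the port and lane counts are taken from the text (four MCIO connectors with 8 lanes each), while the function name `devices_per_mode` is a hypothetical helper, not part of any LR-LINK or Broadcom tool.

```python
# Lane budget for a switch card with four 8-lane MCIO downstream ports.
# Port and lane counts come from the text; names are illustrative.
MCIO_PORTS = 4
LANES_PER_PORT = 8
DOWNSTREAM_LANES = MCIO_PORTS * LANES_PER_PORT  # 32 lanes in total


def devices_per_mode(link_width: int) -> int:
    """Return how many devices of the given link width fit downstream."""
    if link_width not in (4, 8, 16):
        raise ValueError(f"unsupported bifurcation width: x{link_width}")
    return DOWNSTREAM_LANES // link_width


if __name__ == "__main__":
    for width in (4, 8, 16):
        print(f"x{width}: up to {devices_per_mode(width)} devices")
```

Under these assumptions, the 32 downstream lanes accommodate eight x4 devices, four x8 devices or two x16 devices.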

AI training scenarios have extremely high requirements for both GPU and high-speed storage. GPUs need to process massive amounts of data, while the bandwidth and IOPS of traditional SAS/SATA storage cannot meet the demand. However, after the PCIe slots on the motherboard are occupied by GPUs, there are not enough interfaces to deploy NVMe SSD arrays.

· During large-model training, GPU utilization usually falls short of peak computing power. For example, utilization is about 59% in a 1,000-GPU cluster and about 55.2% in a 10,000-GPU cluster.

· Reading training data becomes a bottleneck, leading to longer model iteration cycles

Through the X8 hybrid mode, the LRSV9500-4I can support GPUs and NVMe SSDs at the same time. For example, two X8 ports connect GPUs while the remaining two X8 ports connect two NVMe SSDs as a local cache. GPUs can then read data directly from high-speed local storage, improving training efficiency by 3 to 5 times.

The PCIe 5.0 standard signals at 32 GT/s per lane, double the 16 GT/s of PCIe 4.0. This doubled rate places extremely strict requirements on signal integrity to keep data transmission accurate and efficient. Long cable runs, or inferior cables and connectors, cause signal attenuation and a higher bit error rate; in severe cases, devices are not recognized or disconnect frequently.

· If a card disconnects during GPU training, days of computing results can be lost

· Storage devices run at reduced speed, falling back from PCIe 5.0 to 4.0 or even 3.0

· System instability and blue screens of death occur, affecting business continuity
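A link that has fallen back to a lower speed or width can often be spotted from the operating system. The sketch below assumes a Linux host, where the kernel exposes `current_link_speed`, `max_link_speed`, `current_link_width` and `max_link_width` for PCIe devices under `/sys/bus/pci/devices/`. The helper names are hypothetical, and the check is a starting point for diagnosis rather than a vendor tool.

```python
from pathlib import Path


def parse_speed(text):
    """Turn a sysfs value such as '32.0 GT/s PCIe' into 32.0; None if unparseable."""
    try:
        return float(text.split()[0])
    except (AttributeError, IndexError, ValueError):
        return None


def is_downtrained(cur_speed, max_speed, cur_width, max_width):
    """True if the link negotiated a lower speed or width than the device supports."""
    if None in (cur_speed, max_speed, cur_width, max_width):
        return False  # not a PCIe link, or the attributes could not be read
    return cur_speed < max_speed or cur_width < max_width


def _read(path):
    try:
        return path.read_text().strip()
    except (OSError, ValueError):
        return None


def _to_int(text):
    try:
        return int(text)
    except (TypeError, ValueError):
        return None


def find_downtrained(root="/sys/bus/pci/devices"):
    """Return (address, current speed, max speed, current width, max width) tuples."""
    base = Path(root)
    if not base.is_dir():
        return []
    hits = []
    for dev in sorted(base.iterdir()):
        cur_s = parse_speed(_read(dev / "current_link_speed"))
        max_s = parse_speed(_read(dev / "max_link_speed"))
        cur_w = _to_int(_read(dev / "current_link_width"))
        max_w = _to_int(_read(dev / "max_link_width"))
        if is_downtrained(cur_s, max_s, cur_w, max_w):
            hits.append((dev.name, cur_s, max_s, cur_w, max_w))
    return hits


if __name__ == "__main__":
    for addr, cur_s, max_s, cur_w, max_w in find_downtrained():
        print(f"{addr}: {cur_s} GT/s x{cur_w} (device supports {max_s} GT/s x{max_w})")
```

Some devices deliberately lower their link speed when idle to save power, so a reported fallback is best confirmed while the device is under load.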

The LRSV9500-4I adopts a high-specification PCB design, high-quality connectors and signal optimization technology to keep PCIe 5.0 running stably at full rate. When correctly configured, a PCIe 5.0 NVMe SSD can deliver sequential read and write speeds of up to 14,000 MB/s. The MCIO interface provides a reliable physical connection and, with certified cables, effectively reduces the bit error rate and supports stable 24/7 operation.
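To put the 14,000 MB/s figure in context, the raw bandwidth of a PCIe link can be estimated from the per-lane signaling rate and the 128b/130b line encoding used by PCIe 3.0 through 5.0. The sketch below is a rough estimate that ignores packet and protocol overhead; the function name is illustrative.

```python
# Per-lane signaling rates in GT/s for the PCIe generations mentioned in the text.
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding (PCIe 3.0 to 5.0)


def link_bandwidth_gb_s(gen: int, lanes: int) -> float:
    """Approximate one-direction bandwidth in GB/s, before protocol overhead."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY / 8 * lanes


if __name__ == "__main__":
    for gen in (5, 4, 3):
        print(f"PCIe {gen}.0 x4: {link_bandwidth_gb_s(gen, 4):.2f} GB/s")
    print(f"PCIe 5.0 x16: {link_bandwidth_gb_s(5, 16):.2f} GB/s")
    share = 14.0 / link_bandwidth_gb_s(5, 4)
    print(f"14,000 MB/s is {share:.0%} of a PCIe 5.0 x4 link")
```

By this estimate a PCIe 5.0 x4 link carries about 15.75 GB/s, so a 14,000 MB/s drive uses most of it, and a fallback to PCIe 4.0 or 3.0 halves or quarters the available bandwidth.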

In multi-GPU training scenarios, the interconnection topology between GPUs directly affects training efficiency. Traditional solutions rely on the PCIe lanes provided by the CPU, so communication between cards has to pass through the CPU, which limits bandwidth and adds latency.

· The efficiency of distributed training is low due to insufficient communication bandwidth between GPUs

· Difficulties are encountered in large-scale cluster expansion

In X16 mode, the LRSV9500-4I lets GPUs communicate peer-to-peer (P2P) through the Switch without routing traffic through the CPU, effectively improving the efficiency of multi-card training.
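Whether two GPUs actually reach each other through the switch can be checked from the reported topology. The sketch below assumes NVIDIA GPUs, which the text does not specify, and the connection labels printed by `nvidia-smi topo -m` (PIX, PXB, PHB, NODE, SYS, NV#). The helper `stays_below_host_bridge` is hypothetical and only classifies a label that has already been read from that output.

```python
# Labels from the `nvidia-smi topo -m` legend (assumes NVIDIA GPUs):
#   PIX  - path crosses at most a single PCIe bridge
#   PXB  - path crosses multiple PCIe bridges, but not the host bridge
#   PHB  - path crosses a PCIe host bridge (typically the CPU)
#   NODE - path crosses host bridges within one NUMA node
#   SYS  - path crosses the interconnect between NUMA nodes
#   NV#  - path uses a bonded set of # NVLinks
BELOW_HOST_BRIDGE = {"PIX", "PXB"}
THROUGH_HOST = {"PHB", "NODE", "SYS"}


def stays_below_host_bridge(label: str) -> bool:
    """True if GPU-to-GPU traffic for this label avoids the CPU's host bridge."""
    label = label.strip().upper()
    if label.startswith("NV") and label[2:].isdigit():
        return True  # NVLink does not use the PCIe host bridge
    if label in BELOW_HOST_BRIDGE:
        return True
    if label in THROUGH_HOST:
        return False
    raise ValueError(f"unrecognized topology label: {label!r}")


if __name__ == "__main__":
    for label in ("PIX", "PXB", "PHB", "SYS"):
        print(label, stays_below_host_bridge(label))
```

For two GPUs attached to the same switch card, a PIX or PXB label would be consistent with P2P traffic staying on the switch, while PHB, NODE or SYS suggests the path still crosses the CPU.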

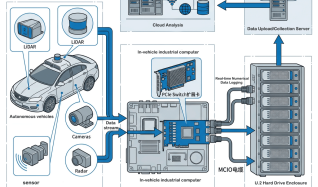

For cross-host clusters, network cards that support RoCE v2 (RDMA over Converged Ethernet) allow GPUs to bypass the CPU and write data directly to the memory of remote GPUs through the network adapter. Multiple servers are interconnected directly to achieve memory sharing and high-speed data exchange.

The pain points of server GPU and storage expansion come down to a conflict between limited resources and ever-growing demand. Through PCIe Switch technology and flexible X4/X8/X16 Bifurcation modes, the LRSV9500-4I gives enterprises an efficient way forward. Whether for AI training, high-performance computing, big data analysis or video production, the LRSV9500-4I provides strong expansion capabilities and investment protection.

As LR-LINK's flagship product in the PCIe 5.0 field, the LRSV9500-4I builds on the leading performance of the Broadcom PEX89048 chip and its ecosystem support, and is becoming a preferred expansion solution for AI server and data center construction. Choosing the LRSV9500-4I means choosing a flexible, efficient and future-oriented expansion architecture.