With the rapid development of artificial intelligence, AI servers and GPU clusters have become the core computing infrastructure of data centers. From large language model training to real-time inference services, AI workloads impose unprecedented demands on computing performance and data throughput. In the hardware layer that underpins these systems, high-speed signal transmission faces severe challenges.

According to industry research institutions, the global GPU market exceeded $40 billion in 2024, with an annual growth rate of over 30%. A single AI training server can integrate 8 or more high-performance GPUs, which form a unified computing pool through high-speed interconnects. Such a high-density architecture places extremely high requirements on data transmission bandwidth and signal quality inside the server.

Meanwhile, storage systems are also undergoing transformation. Traditional SATA and SAS storage can no longer meet the needs of AI workloads, and high-speed SSDs based on the NVMe protocol are becoming mainstream. The newer CXL (Compute Express Link) technology goes a step further on memory expansion and memory/storage convergence, allowing CPUs and accelerators such as GPUs to access expanded and remote memory resources in a cache-coherent manner.

As the mainstream standard for internal device interconnection in servers, PCI Express (PCIe) has evolved to its 5th generation and reached maturity. PCIe 5.0 increases the per-lane transfer rate from 16 GT/s (PCIe 4.0) to 32 GT/s, doubling the per-lane bandwidth. For graphics cards or network adapters in an x16 configuration, the theoretical bandwidth is about 64 GB/s in each direction, or roughly 128 GB/s bidirectional, before encoding and protocol overhead.
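The bandwidth figures above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are made up for this example, and it accounts for the 128b/130b line encoding used by PCIe 3.0 through 5.0 but not for packet or flow-control overhead. With encoding included, the x16 bidirectional figure comes out slightly below the raw 128 GB/s.

```python
# Per-lane raw transfer rates in GT/s, taken from the text above.
RATES_GT_S = {"PCIe 4.0": 16, "PCIe 5.0": 32}

# PCIe 3.0 through 5.0 use 128b/130b line encoding:
# every 130 bits on the wire carry 128 bits of payload.
ENCODING_EFFICIENCY = 128 / 130


def lane_bandwidth_gb_s(rate_gt_s: float) -> float:
    """Bandwidth of one lane in one direction, in GB/s, after line encoding."""
    return rate_gt_s * ENCODING_EFFICIENCY / 8  # 8 bits per byte


def link_bandwidth_gb_s(rate_gt_s: float, lanes: int, bidirectional: bool = False) -> float:
    """Bandwidth of a multi-lane link, optionally summed over both directions."""
    per_direction = lane_bandwidth_gb_s(rate_gt_s) * lanes
    return per_direction * 2 if bidirectional else per_direction


if __name__ == "__main__":
    for gen, rate in RATES_GT_S.items():
        one_way = link_bandwidth_gb_s(rate, lanes=16)
        both = link_bandwidth_gb_s(rate, lanes=16, bidirectional=True)
        print(f"{gen} x16: {one_way:.1f} GB/s per direction, {both:.1f} GB/s bidirectional")
```

Real-world throughput is lower still, because transaction-layer headers and flow control also consume link capacity.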

However, higher transmission rates also introduce new engineering challenges:

· Signal Attenuation: High-speed signals suffer loss when transmitted through PCB traces and connectors; attenuation worsens at higher frequencies. PCIe 5.0 signals have a shorter effective transmission distance than PCIe 4.0, demanding stricter routing design.

· Signal Integrity: High-speed signals are more vulnerable to crosstalk, reflection, and noise, which may cause data transmission errors and degrade system stability.

· Timing Margin: Higher data rates mean narrower timing windows, imposing stricter requirements on clock synchronization and signal edge accuracy.
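To put numbers on the attenuation and timing points above, the following sketch computes the unit interval (the time available for one bit) and the Nyquist frequency for each generation. It assumes NRZ signaling, which PCIe 4.0 and 5.0 both use; the helper names are hypothetical.

```python
def unit_interval_ps(rate_gt_s: float) -> float:
    """Duration of one bit on the wire, in picoseconds.

    1 / (rate * 1e9) seconds equals 1e3 / rate picoseconds.
    """
    return 1e3 / rate_gt_s


def nyquist_ghz(rate_gt_s: float) -> float:
    """Fundamental frequency of an alternating 1010... NRZ pattern, in GHz.

    One full cycle of the waveform spans two bits, hence rate / 2.
    """
    return rate_gt_s / 2


if __name__ == "__main__":
    for gen, rate in (("PCIe 4.0", 16), ("PCIe 5.0", 32)):
        print(f"{gen}: UI = {unit_interval_ps(rate)} ps, Nyquist = {nyquist_ghz(rate)} GHz")
```

Doubling the rate halves the bit period from 62.5 ps to 31.25 ps and moves the Nyquist frequency from 8 GHz to 16 GHz, where PCB trace and connector loss is considerably higher.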

To address high-speed signal transmission challenges, Retimer technology has emerged. A Retimer is a signal regeneration device placed in the high-speed signal path, which detects, recovers, and retimes attenuated signals to extend effective transmission distance and improve signal integrity.

Unlike simple signal amplifiers (Redrivers), Retimers achieve signal regeneration through the following mechanisms:

· Signal Equalization: Compensates for high-frequency attenuation and restores signal amplitude.

· Clock and Data Recovery (CDR): Recovers the clock embedded in the incoming data stream, so that jitter accumulated along the channel is not passed downstream.

· Signal Retiming: Regenerates clean data signals using the recovered clock.

· Protocol Transparency: Takes part in link training and equalization at the physical layer, but does not parse packet contents and is transparent to upper-layer protocols.
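The difference between amplifying and regenerating a signal can be illustrated with a toy model. This is a deliberately simplified sketch, not a model of any real Retimer: it assumes one sample per bit, an arbitrary attenuation and noise level, and it covers only the decide-and-regenerate step, leaving out equalization and clock recovery. All function names are made up for the example.

```python
import random


def to_symbols(bits):
    """Map bits to ideal full-swing NRZ levels (+1.0 / -1.0)."""
    return [1.0 if b else -1.0 for b in bits]


def lossy_channel(symbols, attenuation=0.3, noise_sigma=0.05, seed=0):
    """Scale the signal down and add Gaussian noise (assumed values)."""
    rng = random.Random(seed)
    return [attenuation * s + rng.gauss(0.0, noise_sigma) for s in symbols]


def redrive(samples, gain):
    """Redriver-like stage: linear gain, so noise is amplified with the signal."""
    return [gain * s for s in samples]


def retime(samples):
    """Retimer-like stage: decide each bit, then re-emit a clean full-swing level.

    A real Retimer samples with a recovered clock; here each list entry
    already stands for one ideally timed sample.
    """
    return [1.0 if s > 0.0 else -1.0 for s in samples]


def worst_error(received, ideal):
    """Largest deviation from the ideal level across all bits."""
    return max(abs(r - i) for r, i in zip(received, ideal))


bits = [1, 0, 1, 1, 0, 0, 1, 0] * 8
ideal = to_symbols(bits)
at_receiver = lossy_channel(ideal)

redriven = redrive(at_receiver, gain=1 / 0.3)  # restore amplitude only
retimed = retime(at_receiver)                  # regenerate the bits

print("redriver worst-case error:", round(worst_error(redriven, ideal), 3))
print("retimer worst-case error: ", worst_error(retimed, ideal))
```

In this model the redriven signal still carries the channel noise, scaled up by the gain, while the retimed signal matches the ideal levels as long as every bit decision is correct.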

In AI servers and high-end storage systems, Retimer chips have become critical components ensuring reliable high-speed signal transmission. They play an indispensable role in interconnection between GPUs and CPUs, as well as in extended connections for NVMe SSDs.

CXL (Compute Express Link) is a high-speed interconnect protocol that runs on the PCIe 5.0 physical layer and adds cache-coherency and memory semantics on top of it. The CXL 2.0 standard defines three sub-protocols:

· CXL.io: Compatible with PCIe protocols for device discovery and configuration.

· CXL.cache: Provides device-side cache coherence, allowing a device to cache host memory coherently with the CPU caches.

· CXL.mem: Provides memory-semantic (load/store) access, allowing the host CPU to access memory attached to a CXL device as part of system memory.
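As a supplementary sketch, the three protocols listed above combine into the three device types that the CXL specification describes. The Python names below (`Protocol`, `DEVICE_TYPES`, `uses`) are hypothetical and exist only for this illustration; the type-to-protocol mapping reflects the commonly documented CXL device classes and should be checked against the specification for any real design.

```python
from enum import Flag, auto


class Protocol(Flag):
    """The three CXL sub-protocols, modeled as combinable flags."""
    IO = auto()     # discovery and configuration, PCIe-compatible
    CACHE = auto()  # device coherently caches host memory
    MEM = auto()    # host accesses device-attached memory


# Commonly documented CXL device classes and the protocols each one uses.
DEVICE_TYPES = {
    "Type 1": Protocol.IO | Protocol.CACHE,                 # e.g. caching accelerators, smart NICs
    "Type 2": Protocol.IO | Protocol.CACHE | Protocol.MEM,  # e.g. accelerators with local memory
    "Type 3": Protocol.IO | Protocol.MEM,                   # e.g. memory expanders
}


def uses(device_type: str, protocol: Protocol) -> bool:
    """Return True if the given device type uses the given protocol."""
    return protocol in DEVICE_TYPES[device_type]


if __name__ == "__main__":
    for name in DEVICE_TYPES:
        used = [p.name for p in Protocol if uses(name, p)]
        print(name, "->", ", ".join(used))
```

Every device type includes the I/O protocol, since discovery and configuration are always required; a memory expansion device is the Type 3 case.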

The core value of CXL lies in relieving the CPU-attached memory bottleneck of traditional architectures, allowing accelerators such as GPUs and FPGAs to access large-capacity memory pools in a cache-coherent way. This is crucial for AI training and big data applications that require massive amounts of memory.

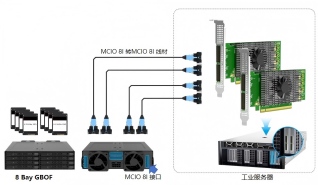

MCIO (Mini Cool Edge IO) is a compact high-speed connector standard designed for next-generation PCIe and CXL applications. MCIO offers the following advantages:

· Higher Density: Supports more signal channels in a smaller space.

· Better Signal Integrity: Optimized pin layout and shielding design reduce crosstalk.

· Cable Connection: Supports external device connection via cables, breaking chassis space limitations.

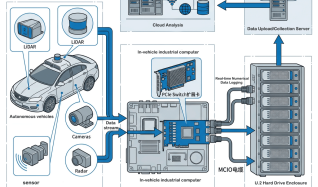

Training large AI models requires hundreds or even thousands of GPUs working together. High-speed interconnects provide the low-latency, high-bandwidth exchange of gradient data and model parameters between GPUs, and Retimers help maintain signal integrity across complex backplanes and long cables.

HPC applications such as scientific computing, simulation, and gene sequencing have extremely high demands for memory bandwidth and capacity. CXL memory expansion combined with Retimer signal enhancement can build large-capacity, high-bandwidth memory pools to accelerate computing tasks.

Cloud gaming servers virtualize multiple GPU instances on a single physical machine to provide real-time rendering services for different users. High-speed storage and memory access are critical to ensuring low-latency gaming experiences.

Software-defined storage (SDS) solutions based on standard servers need to connect a large number of NVMe SSDs. PCIe 5.0 Retimer expansion cards enable high-density SSD expansion to build high-performance storage pools.

Facing increasingly complex high-speed interconnection demands, system designers should consider the following factors:

· Transmission Distance: Evaluate the physical distance signals need to travel to determine whether Retimer enhancement is required.

· Lane Configuration: Select appropriate PCIe bifurcation modes (x16/x8/x4) based on device requirements.

· Protocol Support: Confirm whether CXL protocol support is needed and the specific functional requirements of CXL.

· Thermal Design: High-speed Retimer chips have relatively high power consumption and require proper thermal solutions.

· Compatibility Verification: Ensure the expansion card is compatible with motherboards, operating systems, and target devices.
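The transmission-distance factor above usually comes down to a loss budget: add up the insertion loss of each segment in the signal path and compare it with what the link can tolerate. The sketch below is a minimal illustration under stated assumptions. The 36 dB budget at 16 GHz is a commonly quoted end-to-end figure for PCIe 5.0 and should be confirmed against the specification; every per-segment loss value is hypothetical and would normally come from simulation or measurement.

```python
from dataclasses import dataclass

# Assumed end-to-end insertion-loss budget for PCIe 5.0 at 16 GHz, in dB.
# Commonly quoted figure; confirm against the specification before relying on it.
PCIE5_BUDGET_DB = 36.0


@dataclass
class ChannelSegment:
    name: str
    loss_db: float  # insertion loss at the Nyquist frequency


def total_loss_db(segments) -> float:
    """Sum the insertion loss of every segment in the signal path."""
    return sum(s.loss_db for s in segments)


def needs_retimer(segments, budget_db: float = PCIE5_BUDGET_DB) -> bool:
    """True when the path loses more than the link can tolerate unaided."""
    return total_loss_db(segments) > budget_db


# Hypothetical channel: all loss values are illustrative, not measured.
path = [
    ChannelSegment("CPU package", 8.5),
    ChannelSegment("motherboard trace", 14.0),
    ChannelSegment("riser connector", 1.5),
    ChannelSegment("riser cable", 9.0),
    ChannelSegment("add-in card", 9.5),
]

print(f"total loss: {total_loss_db(path):.1f} dB, budget: {PCIE5_BUDGET_DB:.1f} dB")
print("retimer needed:", needs_retimer(path))
```

A Retimer splits the path into two independently budgeted segments, which is why it extends the usable distance rather than merely boosting the signal.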

The advent of the AI era is reshaping data center architecture. From the high-speed transmission of PCIe 5.0 and the signal regeneration of Retimers to the memory expansion of CXL, each of these technologies helps unlock AI computing potential.

For enterprises planning AI infrastructure, understanding the principles and application scenarios of these underlying technologies supports better-informed technology choices and helps build high-performance, high-reliability computing platforms.

Linkreal (LR-LINK) is a national high-tech enterprise focusing on server and data center connectivity solutions. Its product portfolio includes Ethernet network adapters, storage expansion cards, and GPU expansion solutions. Keeping pace with the development of PCIe 5.0 and CXL, the company provides high-speed signal expansion solutions for AI servers, high-performance computing, software-defined storage, and other application scenarios.